OK, let me give a little bit of context. I will turn 40 in a couple of months, and I have been a C++ software developer for more than 18 years. I enjoy coding, and I enjoy writing “good” code: readable and so on.

However, for the past few months I have become really afraid for the future of the job I like because of the progress of artificial intelligence. Very often I can’t sleep at night because of this.

I fear that my job, while not completely disappearing, will become a very boring job consisting of debugging automatically generated code, or that the job will disappear altogether.

For now, I’m not using AI. A few of my colleagues do, but I don’t want to, for two reasons: first, it removes a part of coding I like, and second, I have the feeling that using it is like sawing off the branch I’m sitting on, if you see what I mean. I also fear that in the near future, people who don’t use it will be fired because management will see them as less productive…

Am I the only one feeling this way? I have the feeling all tech people are enthusiastic about AI.

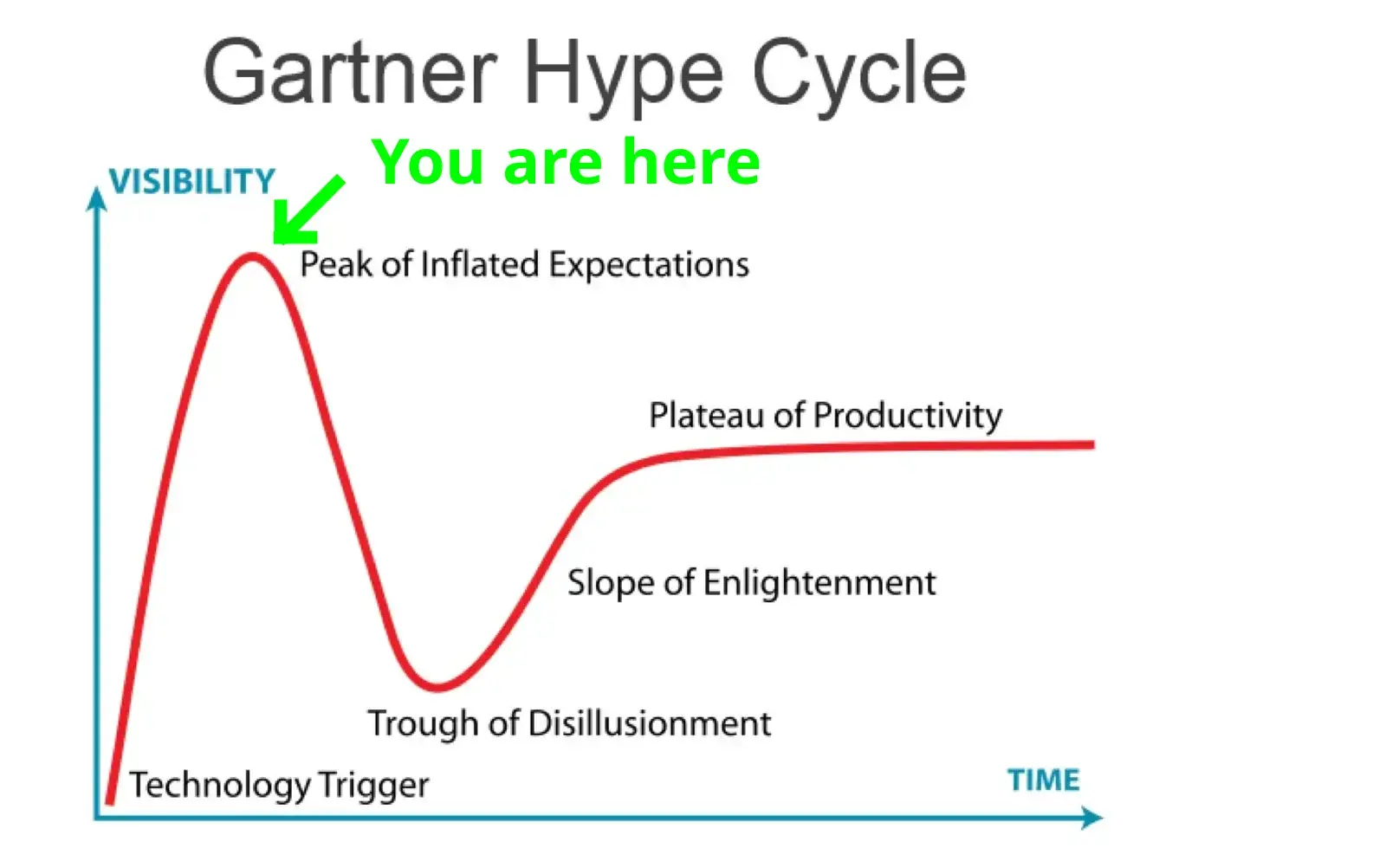

The trough of disillusionment is my favorite.

Kind of nice to see NFTs breaking through the floor at the trough of disillusionment, never to return.

Betteridge’s law of headlines: No.

Currently at the crossroads between trough of disillusionment and slope of enlightenment

I’m not worried about AI replacing employees

I’m worried about managers and bean counters being convinced that AI can replace employees

It’ll be like outsourcing all over again. How many companies outsourced, then walked it back several years later and now only hire in the US? It could be really painful in the short term if that happens (if you consider several years to a decade short term).

Given the degree to which first-level customer service is required to stick to a script, I could see over half of call centers being replaced by LLMs over the next 10 years. The second level service might still need to be human, but I expect they could be an order of magnitude smaller than the first tier.

They’re supposed to be on script but customers veer off the script constantly. They would be extremely annoyed to be talking to AI. Not that it would stop some companies but it would be terrible customer service.

That’s what tier 2 service would be for. But the vast majority of calls are people wanting to execute a simple order or transaction, or ask a silly question they could have googled.

If your problem can be solved by a bot, and that means you can be done immediately instead of waiting on hold for 20+ minutes for tier 2 support, you’re going to prefer it.

Also, we’ve come a long way in just 2-3 years. It’s very hard to predict how good the experience will be in 5-10 years.

If your problem can be solved by a bot, then an old fashioned touch-tone phone menu would be an entirely sufficient solution, no “AI” needed.

If not, then plugging an LLM into your IVR will never be worth the expense since the customer will need to talk to a human anyway.

“AI” is a bubble. Sure, it might have some niche applications where it’s viable, but it’s heavily overpromised and due for disinvestment this year.

And yet we don’t use touch-tone menus; bots that suck are already commonplace. An LLM bot could dramatically improve the user experience, and would probably use the same resources the current bots do.

Simple things like “I want to fill a prescription” or “I want to schedule a technician” or “do you have blah in stock” could be orchestrated by a bot that sounds human, and people would prefer that to traversing a directory tree for 10 minutes.

I don’t even want to think about how someone would implement a customer facing inventory query using a touch-tone interface, let alone use that.

I fail to see how adding an LLM to an IVR could improve that situation. Keywords like “fill prescription”, “schedule technician”, and “do you have [blank] in stock” are already present and don’t need any kind of text generation to shunt a caller into the appropriate queue or run a query on a warehouse database.
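To illustrate the point, here is a minimal sketch of keyword routing with no text generation involved. It assumes the IVR already has a speech-to-text transcript of what the caller said; the keywords and the queue names (`pharmacy`, `field_service`, `inventory`, `operator`) are hypothetical.

```python
# Hypothetical keyword table: keyword -> queue name (illustrative only).
# Dicts keep insertion order, so earlier entries win if several match.
ROUTES = {
    "prescription": "pharmacy",
    "technician": "field_service",
    "in stock": "inventory",
}


def route_call(utterance: str, default: str = "operator") -> str:
    """Return the first queue whose keyword appears in the caller's words."""
    text = utterance.lower()
    for keyword, queue in ROUTES.items():
        if keyword in text:
            return queue
    # Nothing matched: hand the caller to a human.
    return default
```

For example, `route_call("I want to fill a prescription")` lands in the `pharmacy` queue, and anything unrecognized falls through to a human operator.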

Where, exactly, do you think an LLM could contribute other than, like, a computer generated bedtime story hotline or something?

I was a call center supervisor until recently and yeah, this is definitely coming. It had already reached the point where they were arguing with me about hiring enough people, because soon we’d have an AI solution to take a lot of the calls. You can already see it in the chatbots coming out.

Do you know of any companies that have done this? I’m not asking to be facetious, just genuinely curious.

The one I work for did this years ago, before I worked here. I already have enough personal info about myself out there, so I’m not going to name the company, lol. I know I’ve seen it at other companies too.

Copilot is just so much faster than me at generating code that looks fancy and also manages to maximize the number of warnings and errors.

That will happen. And if they’re wrong, they’ll crash and burn. That’s how tech bubbles burst.

Clearly my main concern… But after reading a lot of reassuring comments, I’m more and more convinced that humans will always be superior.

This is their only retaliation for the fact that managers have already been replaced by git tools and CI.

There’s a massive amount of hype right now, much like everything was blockchains for a while.

AI/ML is not able to replace a programmer, especially not a senior engineer. Right now I’d advise you do your job well and hang tight for a couple of years to see how things shake out.

(me = ~50 years old DevOps person)

I’m only in my very first year of DevOps, and I already have five years’ worth of AI giving me hilarious, sad and ruinous answers about the field.

I needed proper knowledge of Ansible ONCE so far, and it managed to lie about Ansible to me TWICE. AI is many things, but an expert system it is not.

Well, technically “expert system” is a type of AI from a couple of decades ago that was based on rules.
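A toy forward-chaining engine in the spirit of those older rule-based systems: a set of known facts plus if-then rules, applied repeatedly until nothing new can be derived. The fact names and rules below are made up for illustration and don’t come from any real product.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule fires when all of its premises are known facts,
# and then adds its conclusion as a new fact.
Rule = Tuple[FrozenSet[str], str]

# Hypothetical diagnostic rules, purely illustrative.
RULES: List[Rule] = [
    (frozenset({"playbook_fails", "host_unreachable"}), "network_problem"),
    (frozenset({"network_problem", "ssh_port_closed"}), "check_firewall"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Apply rules until no new facts are derived (a fixed point)."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known
```

Unlike an LLM, every conclusion here can be traced back to the explicit rules that produced it, which is exactly why such systems were trusted as “expert” within their narrow domain.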

Great advice. I would just add: learn to leverage those tools effectively. They are a great productivity boost. Another side effect, once they become popular, is that some skills we already have will become harder to learn, so they might be in higher demand.

Anyway, make sure you put aside enough money to not have to worry about such things 😃

So, I asked ChatGPT to write a quick PowerShell script to find the number of months between two dates. The first answer it gave me took the number of days between them and divided by 30. I told it that it needed to be more accurate than that, so it wrote a while loop that added 1 month to the first date until it was larger than the second date. Not only is that obviously the most inefficient way to do it, but it had no check to ensure the first date was actually the earlier one, so you could just end up with zero. The results I got from Copilot were not much better.
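For comparison, here is a minimal sketch of a non-looping approach, written in Python rather than PowerShell: take the year/month difference directly, subtract one if the end day hasn’t reached the start day yet, and handle reversed arguments explicitly instead of silently returning zero. Counting a month as complete once the same day-of-month is reached is an assumed convention; month-end dates (e.g. Nov 30 to Feb 29) are a judgment call this sketch doesn’t try to settle.

```python
from datetime import date


def whole_months_between(start: date, end: date) -> int:
    """Number of complete calendar months from start to end.

    Negative if end is before start. A month counts as complete once
    end's day-of-month reaches start's day-of-month (assumed convention).
    """
    if end < start:
        # Handle reversed input explicitly instead of returning 0.
        return -whole_months_between(end, start)
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the last month isn't complete yet
    return months
```

This is constant time regardless of how far apart the dates are, which is the main problem with the add-a-month-in-a-loop version.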

From my experience, unless there is existing code that does exactly what you want, these AIs are not at the level of an experienced dev. Not by a long shot. They will obviously get better, but as with anything in this industry, you have to keep up and adapt or you’ll get left behind.

The thing is that you need several AIs: one to write the question so that the one who codes gets the question you actually want answered, then a third one to write checks and follow up on the code that was written.

When run in a feedback loop like this, the quality you get out will be much higher than just asking ChatGPT to make something.
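A rough sketch of that three-role loop, with each “AI” modeled as a plain Python callable so the control flow is visible. The function names and the `max_rounds` cap are hypothetical; in practice each callable would wrap a model call, and nothing here refers to a specific vendor API.

```python
from typing import Callable, Optional, Tuple

# Each role is just a callable here; real versions would wrap model calls.
Refiner = Callable[[str], str]                      # rewrites the request
Coder = Callable[[str, Optional[str]], str]         # spec + prior feedback -> code
Checker = Callable[[str], Tuple[bool, str]]         # code -> (passed?, feedback)


def feedback_loop(
    request: str,
    refine: Refiner,
    code: Coder,
    check: Checker,
    max_rounds: int = 3,
) -> Tuple[str, bool]:
    """Refine the request once, then alternate coding and checking."""
    spec = refine(request)            # role 1: write the question properly
    feedback: Optional[str] = None
    candidate = ""
    for _ in range(max_rounds):
        candidate = code(spec, feedback)   # role 2: write code, using feedback
        ok, feedback = check(candidate)    # role 3: review or test the code
        if ok:
            return candidate, True
    # Give up after max_rounds and return the last attempt, marked as failed.
    return candidate, False
```

The cap on rounds matters: without it, a checker that never passes would keep the loop spinning forever.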

This is the idea behind things like “chain of thought” and “tree of thought”.

This is a real danger in the long term. If AI and robotics advance to a certain level, they could detach a big portion of the lower and middle classes from society’s flow of wealth and disrupt structures that have existed since the early industrial revolution. The educated common man stops being an asset. The whole world becomes a banana republic where only industry and government are needed, and there is an impassable gap between common people and the uncaring elite.

Right. I agree that in our current society, AI is a net loss for most of us. There will be a few lucky ones who will almost certainly be paid more than they are now, but that will be at the cost of everyone else, and even they will certainly be paid less than the shareholders and executives. The end result is a much lower quality of life for basically everyone. Remember what the Luddites were actually protesting and you’ll see how AI is no different.

This is exactly what I see as the risk. However, the elites running industry are, on average, fucking idiots. So we have been seeing frequent cases of them trying to replace people whose jobs they don’t understand with technology that even leading scientists don’t fully understand, in order to keep those wages for themselves, all in spite of those who do understand the jobs saying that it is a bad idea.

Don’t underestimate the willingness of upper management to gamble on things and inflict the consequences of failure on the workforce. Nor their willingness to switch to a worse solution, not because it is better or even cheaper but because it means giving less to employees, if they think that they can get away with it.

White collar never should have been getting paid so much more than blue collar, and I welcome seeing the shift balance out, so everyone wants to eat the rich.

White collar never should have been getting paid so much more than blue collar

Actually I see that the other way around. Blue collar should have never been paid so much less than white collar.

The rich will have weapons and technology. I see a 1984 + Hunger Games scenario as more likely.

I’m in IT and I don’t believe this will happen for quite a while if at all. That said I wouldn’t let this keep you up at night, it’s out of your control and worrying about it does you no favours. If AI really can replace people then we are all in this together and we will figure it out.

AI is a really bad term for what we are all talking about. These sophisticated chatbots are just cool tools that make coding easier and faster, and for me, more enjoyable.

What the calculator is to math, LLMs are to coding, nothing more. Actual sci-fi-style AI, like self-aware code, would be scary if it were ever demonstrated to even be possible, which it has not been.

If you ever have a chance to use these programs to help you speed up writing code, you will see that they absolutely do not live up to the hype attributed to them. People shouting the end is nigh are seemingly exclusively people who don’t understand the technology.

I’ve never had to double check the results of my calculator by redoing the problem manually, either.

Yeah, this is the thing that always bothers me. Due to the very nature of them being large language models, they can generate convincing language. Likewise, image “AI” can generate convincing images. Calling it AI is both a PR move for branding and an attempt to conceal the fact that it’s all just regurgitating bits of stolen copyrighted content.

Everyone talks about AI “getting smarter”, but by the very nature of how these types of algorithms work, they can’t “get smarter”. Yes, you can make them work better, but they will still only be either interpolating or extrapolating from the training set.

Haven’t we started using AGI, or artificial general intelligence, as the term to describe the kind of AI you are referring to? That self aware intelligent software?

Now AI just means reactive coding designed to mimic certain behaviours, or even self learning algorithms.

That’s true, and language is constantly evolving for sure. I just feel like AI is a bit misleading because it’s such a loaded term.

I get what you mean, and I think a lot of laymen do have unreasonable ideas about what LLMs are capable of, but as a counterpoint, we have used the label AI to refer to very simple bits of code for decades, e.g. video game characters.

AI is the correct term. It’s the name of the field of study and anything that mimics intelligence is an AI.

Neural networks are a perfect example of an AI. What you actually code is very simple: a bunch of nodes that pass numbers forward through the system, applying weights to the values. Once trained, their capabilities far outstrip the simple code they run, and they seem intelligent.
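To make “nodes that pass numbers forward applying weights” concrete, here is a dependency-free sketch of one forward pass through a tiny fully connected network (2 inputs, 2 hidden nodes, 1 output). The weights are arbitrary made-up numbers, not a trained model; the point is only how little code the mechanism itself needs.

```python
import math
from typing import List

Matrix = List[List[float]]


def dense(inputs: List[float], weights: Matrix, biases: List[float]) -> List[float]:
    """One layer: each output node is a weighted sum of the inputs plus a bias."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]


def relu(values: List[float]) -> List[float]:
    """Zero out negative activations."""
    return [max(0.0, v) for v in values]


def sigmoid(v: float) -> float:
    """Squash a number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-v))


def forward(x: List[float]) -> float:
    # Arbitrary illustrative weights for a 2 -> 2 -> 1 network.
    hidden = relu(dense(x, [[0.5, -1.0], [1.5, 0.25]], [0.0, -0.5]))
    out = dense(hidden, [[1.0, -2.0]], [0.1])[0]
    return sigmoid(out)
```

Training is the part that finds useful weights; running the trained network is just this kind of arithmetic repeated over much larger matrices.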

What you are referring to is general AI.

It’s a misnomer, but if you want to pass off LLMs as “artificial intelligence” on technicality of definition, you’d also have to include

advanced web search engines (e.g., Google Search), recommendation systems (used by YouTube, Amazon, and Netflix)

etc.

Indeed you do.

Neural networks are some of the original AIs.

Yes, those are also examples of AI; see the relevant Wikipedia article:

AI is whatever hasn’t been done yet

We need better terms to specify exactly what we mean, e.g. a numeric scale of intelligence or maybe even something more complex like a radar chart.

I use AI heavily at work now. But I don’t use it to generate code.

I mainly use it instead of googling and skimming articles to get information quickly and allow follow up questions.

I do use it for boring refactoring stuff though.

In its current state it will never replace developers. But it will likely mean you need fewer developers.

The speed at which our latest juniors can pick up a new language or framework by leaning on LLMs is quite astounding. It’s definitely going to be a big shift in the industry.

At the end of the day our job is to automate things so tasks require less staff. We’re just getting a taste of our own medicine.

I mainly use it instead of googling and skimming articles to get information quickly and allow follow up questions.

I do use it for boring refactoring stuff though.

Those are also my main use cases.

Really good for getting a quick overview of a new topic, and also really good at proposing different solutions/algorithms for an issue when you describe it.

Doesn’t always respond correctly but at least gives you the terminology you need to follow up with a web search.

Also very good for generating boilerplate code. Like here’s a sample JSON, generate the corresponding C# classes for use with System.Text.Json.JsonSerializer.
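The same kind of boilerplate can also be produced deterministically. Below is a rough sketch, in Python, that reads a flat sample JSON object and prints C# class source with `[JsonPropertyName]` attributes (from `System.Text.Json.Serialization`) for use with `JsonSerializer`. It only handles top-level string/number/bool fields; nested objects, arrays and nulls simply become `object`, which is a deliberate simplification, and the class name is supplied by the caller.

```python
import json


def csharp_type(value) -> str:
    """Map a JSON scalar to a C# type; anything else becomes object."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return "bool"
    if isinstance(value, int):
        return "long"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"
    return "object"  # simplification for nested objects, arrays and null


def pascal_case(name: str) -> str:
    """Turn snake_case or kebab-case keys into PascalCase property names."""
    parts = name.replace("-", "_").split("_")
    return "".join(p[:1].upper() + p[1:] for p in parts if p)


def generate_class(class_name: str, sample_json: str) -> str:
    """Emit a C# class with one auto-property per top-level JSON field."""
    sample = json.loads(sample_json)
    lines = [
        "using System.Text.Json.Serialization;",
        "",
        f"public class {class_name}",
        "{",
    ]
    for key, value in sample.items():
        lines.append(f'    [JsonPropertyName("{key}")]')
        lines.append(f"    public {csharp_type(value)} {pascal_case(key)} {{ get; set; }}")
    lines.append("}")
    return "\n".join(lines)
```

A template like this is predictable but rigid; the appeal of an LLM for this task is that it copes with nesting and naming quirks without you writing the generator first.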

Hopefully the hardware requirements will come down as the technology gets more mature or hardware gets faster so you can run your own “coding assistant” on your development machine.

That’s been my experience as well, it’s faster to write a query for a model than to google and go through bunch of blogs or stackoverflow discussions. It’s not always right, but that’s also true for stuff you find online. The big advantage is that you get a response tailored to what you’re actually trying to do, and like you said, if it’s incorrect at least now you know what to look for.

And you can run pretrained models locally already if you have a relatively beefy machine. FauxPilot is an example. I imagine in a few years running local models is going to become a lot more accessible.

As an example, Salesforce has been trying to replace developers with “easy to use tools” for a decade now.

They’re no closer than when they started. Yes, the new and improved Flow Builder and OmniStudio look great initially in the simple little preplanned demos they make. But they’re very slow, unsafe to use and generally impossible to debug.

For instance, a common use case: a sales guy wants to create an opportunity with a product. They go on about how OmniStudio lets an admin create a set of independently loading pages that let them:

• create the opportunity record, associating it with an existing account number.

• add a selection of products to it.

But what if the account number doesn’t exist? It fails. It can’t create the account for you, nor prompt you to do it in a modal. The opportunity page only works with the opportunity object.

Also, if the user tries to go back, it doesn’t allow them to delete products already added to the opportunity.

Once we get actual AIs that can do context and planning, then our field is in danger. But so long as we’re going down the glorified chatbot route, that’s not in danger.

I think all jobs that are pure mental labor are under threat to a certain extent from AI.

It’s not really certain when real AGI is going to start to become real, but it certainly seems possible that it’ll be real soon, and if you can pay $20/month to replace a six-figure software developer then a lot of people are in trouble, yes. Like a lot of other revolutions like this that have happened, not all of it will be “AI replaces engineer”; some of it will be “engineer who can work with the AI and complement it to be productive will replace engineer who can’t.”

Of course that’s cold comfort once it reaches the point that AI can do it all. If it makes you feel any better, real engineering is much more difficult than a lot of other pure-mental-labor jobs. It’ll probably be one of the last to fall, after marketing, accounting, law, business strategy, and a ton of other white-collar jobs. The world will change a lot. Again, I’m not saying this will happen real soon. But it certainly could.

I think we’re right up against the cold reality that a lot of the systems that currently run the world don’t really care if people are taken care of and have what they need in order to live. A lot of people who aren’t blessed with education and the right setup in life have been struggling really badly for quite a long time no matter how hard they work. People like you and me who made it well into adulthood just being able to go to work and that be enough to be okay are, relatively speaking, lucky in the modern world.

I would say you’re right to be concerned about this stuff. I think starting to agitate for a better, more just world for all concerned is probably the best thing you can do about it. Trying to hold back the tide of change that’s coming doesn’t seem real doable without that part changing.

It’s not really certain when real AGI is going to start to become real, but it certainly seems possible that it’ll be real soon

What makes you say that? The entire field of AI has not made any progress towards AGI since its inception and if anything the pretty bad results from language models today seem to suggest that it is a long way off.

You would describe “recognizing handwritten digits some of the time” -> “GPT-4 and Midjourney” as no progress in the direction of AGI?

It hasn’t reached AGI or any reasonable facsimile yet, no. But up until a few years ago something like ChatGPT seemed completely impossible, and then a few big key breakthroughs happened, and now the impossible is possible. It seems by no means out of the question that a few more big breakthroughs could happen with AGI, especially with as much attention and effort as is going into the field now.

It’s not that machine learning isn’t making progress, it’s just many people speculate that AGI will require a different way of looking at AI. Deep Learning, while powerful, doesn’t seem like it can be adapted to something that would resemble AGI.

You mean, it would take some sort of breakthrough?

(For what it’s worth, my guess about how it works is to generally agree with you in terms of real sentience – just that I think (a) neither one of us really knows that for sure (b) AGI doesn’t require sentience; a sufficiently capable fakery which still has limitations can still upend the world quite a bit).

Yes, and most likely more of a paradigm shift. The way deep learning models work is largely around static statistical models. The main issue here isn’t the statistical side, but the static nature. For AGI this is a significant hurdle because as the world evolves, or simply these models run into new circumstances, the models will fail.

It’s largely the reason why autonomous vehicles have sorta hit a standstill. It’s the last 1% (what if an intersection is out, what if the road is poorly maintained, etc.) that is so hard for these models, as it requires “thought” and not just input/output.

LLMs have shown that large quantities of data seem to approach some sort of generalized knowledge, but researchers don’t necessarily agree on that https://arxiv.org/abs/2206.07682. So if we can’t get to more emergent abilities, it’s unlikely AGI is on the way. But as you said, combining and interweaving these systems may get something close.

a sufficiently capable fakery which still has limitations can still upend the world quite a bit

Maybe but we are essentially throwing petabyte sized models and lots of compute power at it and the results are somewhere on the level where a three year old would do better in not giving away that they don’t understand what they are talking about.

Don’t get me wrong, LLMs and the other recent developments in generative AI models are very impressive but it is becoming increasingly clear that the approach is maybe barely useful if we throw about as many computing resources at it as we can afford, severely limiting its potential applications. And even at that level the results are still so bad that you essentially can’t trust anything that falls out.

This is very far from being sufficient to fake AGI and has absolutely nothing to do with real AGI.

I’m both unenthusiastic about A.I. and unafraid of it.

Programming is a lot more than writing code. A programmer needs to set up a reliable deployment pipeline, or write a secure web-facing interface, or make a usable and accessible user interface, or correctly configure logging, or identity and access, or a million other nuanced, pain-in-the-ass tasks. I’ve heard some programmers occasionally decrypt what the hell the client actually wanted, but I think that’s a myth.

The history of automation is somebody finds a shortcut - we all embrace it - we all discover it doesn’t really work - someone works their ass off on a real solution - we all pay a premium for it - a bunch of us collaborate on an open shared solution - we all migrate and focus more on one of the 10,000 other remaining pain-in-the-ass challenges.

A.I. will get better, but it isn’t going to be a serious viable replacement for any of the real work in programming for a very long time. Once it is, Murphy’s law and history teach us that there’ll be plenty of problems it still sucks at.

They haven’t replaced me with cheaper non-artifical intelligence yet and that’s leaps and bounds better than AI.

Yeah, the real danger is probably that it will be harder for junior developers to be considered worth the investment.

If you are afraid about the capabilities of AI you should use it. Take one week to use chatgpt heavily in your daily tasks. Take one week to use copilot heavily.

Then you can make an informed judgement instead of being irrationally scared of some vague concept.

Yeah, not using it isn’t going to help you when the bottom line is all people care about.

It might take junior dev roles and turn senior devs into QA, but that skillset will be key moving forward if that happens. You’re only shooting yourself in the foot by refusing to integrate it into your workflows, even if it’s just as an assistant/troubleshooting aid.

It’s not going to take junior dev roles; it’s going to transform the whole workflow and make the dev job more like QA than actual development, since the difference between junior, mid-level and senior is often only the scope of their responsibility. (I’ve seen companies that make juniors do a full-stack senior job while on paper they were still juniors, with pay somewhere between junior and mid-level, and such companies are the majority in rural areas.)

I’m a composer. My Facebook is filled with ads like “Never pay for music again!”. It’s fucking depressing.

Good thing there’s no Spotify for sheet music yet… I probably shouldn’t give them ideas.

Programming is the most automated career in history. Functions / subroutines allow one to just reference the function instead of repeating it. Grace Hopper wrote the first compiler in 1951; compilers, assemblers, and linkers automate creating machine code. Macros, higher level languages, garbage collectors, type checkers, linters, editors, IDEs, debuggers, code generators, build systems, CI systems, test suite runners, deployment and orchestration tools etc… all automate programming and programming-adjacent tasks, and this has been going on for at least 70 years.

Programming today would be very different if we still had to wire up ROM or something like that, and even if the entire world population worked as programmers without any automation, we still wouldn’t achieve as much as we do with the current programmer population + automation. So it is fair to say automation is widely used in software engineering, and greatly decreases the market for programmers relative to what it would take to achieve the same thing without automation. Programming is also far easier than if there was no automation.

However, there are more programmers than ever. It is because programming is getting easier, and automation decreases the cost of doing things and makes new things feasible. The world’s demand for software functionality constantly grows.

Now, LLMs are driving the next wave of automation in the world’s most automated profession. However, progress is still slow - without building massive, very energy-expensive models, outputs often need a lot of manual human-in-the-loop work; they are great as a typing assist to predict the next few tokens, and sometimes to spit out a common function that you might otherwise have been able to get from a library. They can often answer questions about code, quickly find things, and help you find the name of a function you know exists but can’t remember the exact name for. And they can do simple tasks that involve translating from well-specified natural language into code. But in practice, trying to use them for big complicated tasks is currently often slower than just doing it without LLM assistance.

LLMs might improve, but probably not so fast that it is a step change; it will be a continuation of the same trends that have been going for 70+ years. Programming will get easier, there will be more programmers (even if they aren’t called that) using tools including LLMs, and software will continue to get more advanced, as demand for more advanced features increases.

AI powered spreadsheets are going to be the next big technology for programmers :D