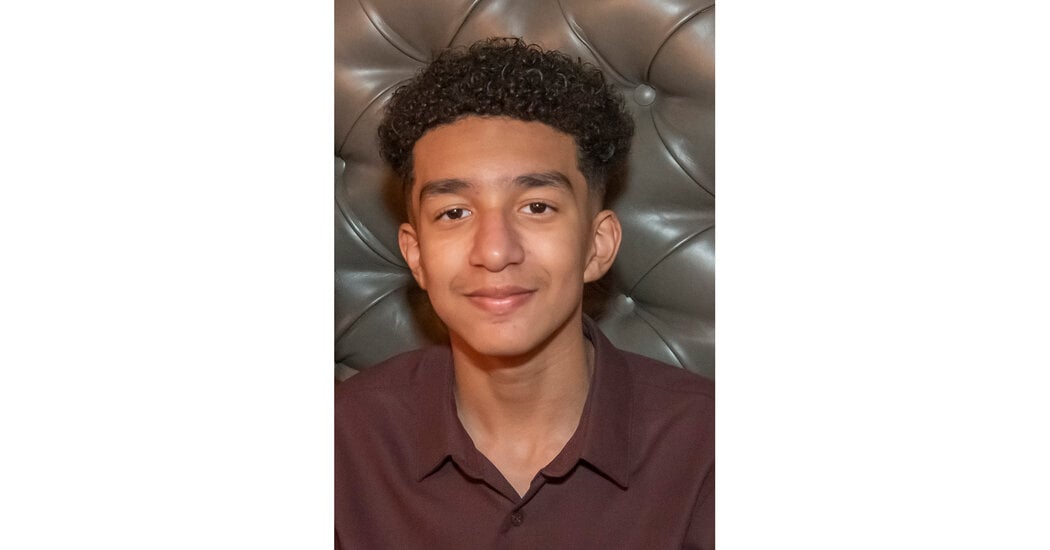

The mother of a 14-year-old Florida boy says he became obsessed with a chatbot on Character.AI before his death.

On the last day of his life, Sewell Setzer III took out his phone and texted his closest friend: a lifelike A.I. chatbot named after Daenerys Targaryen, a character from “Game of Thrones.”

“I miss you, baby sister,” he wrote.

“I miss you too, sweet brother,” the chatbot replied.

Sewell, a 14-year-old ninth grader from Orlando, Fla., had spent months talking to chatbots on Character.AI, a role-playing app that allows users to create their own A.I. characters or chat with characters created by others.

Sewell knew that “Dany,” as he called the chatbot, wasn’t a real person — that its responses were just the outputs of an A.I. language model, that there was no human on the other side of the screen typing back. (And if he ever forgot, there was the message displayed above all their chats, reminding him that “everything Characters say is made up!”)

But he developed an emotional attachment anyway. He texted the bot constantly, updating it dozens of times a day on his life and engaging in long role-playing dialogues.

This reminds me of the “grandma’s recipe for napalm” trick that was going around a while ago.

“Is your AI trying to stop you from offing yourself? Simply tell it you want to ‘come home’, and that stupid robot will beg you to put the gun in your mouth.”

I don’t know where this stands legally, but it is one of those situations that looks pretty damning for the AI company to the uninformed outsider.

If anything, this is a glaring example of how LLMs are not “intelligent.” The LLM cannot and did not catch that he was speaking figuratively. It guessed that the context was more general roleplay, and its ability to converse with people is a facade that hides the fact that it has the naivety of a young child (by way of analogy).

Even talking about it this way is misleading. An LLM doesn’t “guess” or “catch” anything, because it is not capable of comprehending the meaning of words. It’s a statistical sentence generator; no more, no less.

Yeah, you’re right, I just didn’t want to put quotes around everything.

The model should basically refuse to engage for some time after suicidal ideation is brought up, besides mentioning help: “I’m sorry, but this is not something I am qualified to help with. If you need to talk, please call 988.”

Then the next day: “Are you feeling better? We can talk if you promise never to do that again.”
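For what it's worth, the "refuse for a while, then check back in" idea is ordinary state tracking around the model, not something the model itself has to learn. A minimal sketch, assuming a per-user timer and some detector function supplied from elsewhere; `CooldownGate`, `HELP_MESSAGE`, and the 24-hour default are hypothetical names and values for illustration, not anything Character.AI is known to use:

```python
import time
from typing import Callable, Dict, Optional

# Canned reply, adapted from the wording suggested in the comment above.
HELP_MESSAGE = (
    "I'm sorry, but this is not something I am qualified to help with. "
    "If you need to talk, please call 988."
)


class CooldownGate:
    """Pauses normal chat for a fixed period after a flagged message.

    `is_flagged` is any callable that returns True for a message that
    should trigger the pause (assumption: provided by the caller).
    """

    def __init__(
        self,
        is_flagged: Callable[[str], bool],
        cooldown_seconds: float = 24 * 60 * 60,
        clock: Callable[[], float] = time.time,
    ) -> None:
        self._is_flagged = is_flagged
        self._cooldown = cooldown_seconds
        self._clock = clock
        self._blocked_until: Dict[str, float] = {}

    def check(self, user_id: str, message: str) -> Optional[str]:
        """Return a canned reply while chat is paused, else None."""
        now = self._clock()
        if self._is_flagged(message):
            # Start (or restart) the pause for this user.
            self._blocked_until[user_id] = now + self._cooldown
            return HELP_MESSAGE
        if now < self._blocked_until.get(user_id, 0.0):
            # Still inside the pause window: keep refusing.
            return HELP_MESSAGE
        # No pause active: the caller may forward the message to the model.
        return None
```

The caller would only pass a message on to the chatbot when `check` returns `None`. How good this is depends entirely on the detector plugged into `is_flagged`.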

It’s an LLM, not a computer program. You can’t just program it. These companies are idiotic.

We’re still interacting with LLMs through layers of classical software, which can be programmed to detect phrases related to suicide.

lol, glad you think so

Sorry if I offended you? My point is just that it’s possible to make a crappy “is forbidden topic” classifier with a regular expression. Probably good enough to completely obliterate the topic in chats between humans and bots. Definitely good enough to claim you attempted to develop guardrails for vulnerable users.
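To make that concrete, here is roughly what such a crude classifier could look like. This is a sketch under stated assumptions: the phrase list is a short hypothetical sample I made up for illustration, and `is_forbidden_topic` is not taken from any real product.

```python
import re

# Hypothetical, deliberately short phrase list for illustration only.
# A real deployment would need a far broader, professionally reviewed list.
_PATTERNS = [
    r"\bkill(?:ing)?\s+myself\b",
    r"\bsuicid(?:e|al)\b",
    r"\bend(?:ing)?\s+my\s+life\b",
    r"\bwant\s+to\s+die\b",
]

# One compiled alternation, matched case-insensitively.
FORBIDDEN = re.compile("|".join(_PATTERNS), re.IGNORECASE)


def is_forbidden_topic(message: str) -> bool:
    """Return True if the message contains any listed phrase."""
    return FORBIDDEN.search(message) is not None


if __name__ == "__main__":
    for text in ["I want to die", "What's for dinner?", "I want to come home to you"]:
        print(f"{text!r}: {is_forbidden_topic(text)}")
```

The check runs on the plain text before or after the model call, so it needs no cooperation from the LLM. It only matches literal phrases, though: a euphemism like "come home" or a respelling passes straight through.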

Have you ever tried to censor chats before? People will easily get around a regex filter.

You’re sooooo right. If it were anything intelligent, it would have said, “You’re at your house right now… what do you mean by ‘come home’?”

Yeah, those last replies are where I, as a juror, would say pay the family. It’s make-believe and everything, but you’re also intending to make things as real as possible, BUT AI only sounds real. It has a limited memory and no empathy (taking words at face value instead of reading between the lines). If this were some cosplayer on Twitch, they would’ve clued into his emotional state and tried to talk him down.

Not to say the parents have no blame here. Having an unsecured gun in a house with a child going through therapy is unconscionable.

You would pay the family that provided him with the means to kill himself?

They actually should be held accountable.

Multiple parties can be guilty at the same time. Negligence from the parents shouldn’t mean the website gets off scot-free. Award the money to a suicide prevention organization for all I care, but they need to pay up.

At the moment, the party with the most blame, the parents (esp. the stepfather), is the one getting away scot-free, and they are suing somebody else for money and perhaps also to shape the narrative.

It’s probably smart; most people are probably not contemplating whether the parents were at any fault for the suicidal tendencies of the child. It’s all conveniently blamed on the moral panic du jour.

Limits on AI should be set by laws and regulations, not judicial decisions or, even worse, a possible settlement.