Last night I was nearly tearing my hair out trying to get a crappy inkjet printer installed when I turned to ChatGPT for help; 5 minutes later it was done (driver issues).

I realised that I use it a lot for all sorts of things but haven’t really seen any posts on here about it, and was wondering if other people do the same.

I’ve used it to create bash scripts, write small, niche python programs (eg sort photos into separate folders based on their orientation), and create cron jobs among other things.

In particular, I find it useful when I want to use a command line program that has a complicated set of options / syntax, but I might only ever want to use it once, or very infrequently. So I tell it what I want to accomplish, and ask it to give me the correct command to run with the options I specify in plain language.

Example:

User

Give me the correct rsync command to recursively copy all files in /home/user/Documents that are image files of any type but were created more recently than 1/1/2024. The destination is /home/user/foo

ChatGPT

rsync -avm --include='*/' --include='*.jpg' --include='*.jpeg' --include='*.png' --include='*.gif' --include='*.bmp' --include='*.tiff' --include='*.svg' --exclude='*' --prune-empty-dirs --min-age=$(date -d '2024-01-01' +%s) /home/user/Documents/ /home/user/foo/

User

Break down the command and explain each part of it in dot point format.

ChatGPT

- `rsync`: Invokes the rsync program, which is used for efficiently transferring and synchronizing files across systems or within a system.
- `-avm`:
  - `-a`: Archive mode, which preserves permissions, symlinks, modification times, group, and ownership. Implicitly includes recursion.
  - `-v`: Verbose mode, which provides detailed output of what rsync is doing.
  - `-m`: Prune empty directory chains from the file-list.
- `--include='*/'`: Include directories themselves, which is necessary for recursion.
- `--include='*.jpg' --include='*.jpeg' --include='*.png' --include='*.gif' --include='*.bmp' --include='*.tiff' --include='*.svg'`: Include files that match these image file extensions.
- `--exclude='*'`: Exclude all other files that do not match the previously stated include patterns.
- `--prune-empty-dirs`: Exclude empty directories that result from the inclusion/exclusion pattern.
- `--min-age=$(date -d '2024-01-01' +%s)`: Exclude files that are older than the timestamp for January 1, 2024. This uses `date -d` to convert a date string into a timestamp, which `rsync` uses to filter files.
- `/home/user/Documents/`: Source directory from which files are being synchronized.
- `/home/user/foo/`: Destination directory to which files are being synchronized.

It’s also really good for explaining concepts / commands in plain language.

It’s like having a 24 hour on call Linux expert :)

# Things to note:

- Get the subscription. ChatGPT 3.5 is pretty useless. ChatGPT4 is fine, but I’m pretty sure you need the subscription to access it.

- Give it pre-instructions. I have told mine what distro, what shell I’m using and the make and model of my laptop. If you have a subscription you can add these as permanent pre-instructions, so to speak. That way it will be much more likely to give you correct answers.

- It’s not magic. In order to get what you want, you have to be able to ask the right questions. It will boost your ability but won’t turn you into a 1337 haxx0r.

- Ask questions. As always, don’t run any commands that you don’t understand. Ask it to break down any commands it tells you to run if you don’t understand them.

- Sometimes it goofs. For various reasons, sometimes it will ask you to install a package that no longer exists, or will give you a command that doesn’t work. When that happens, I just feed the error message back into ChatGPT and it usually is able to correct itself.

- Ask “Is there a better or easier way to do this?” This is probably the most valuable question I’ve found to ask ChatGPT. Sometimes it gets so far in the weeds looking for a solution to a problem that you need to pull back and start fresh.

Chatgpt does not know truth. It does not know if the info it provides is true. It does not know if the code actually works. It just concatenates strings based on probability. You may be lucky or you aren’t. The easier the task, the more likely it’ll succeed. But a low difficulty is no guarantee for success.

It is great for layouts, structure and for the basic concept. “For loop in fish”. But it may struggle to convert a video from x264 to av1 with ffmpeg. It depends on info that’s provided online. If it uses misinformation, then that’s in there as well.

The command you got is just wrong. What about avif, jxl or most other image formats? Use it, but think.
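For what it's worth, as far as I can tell from the rsync man page there is no `--min-age` option at all, and Linux filesystems don't reliably expose a creation time anyway. Below is a rough Python sketch of what the request probably meant, assuming "created more recently than" can be approximated by modification time. The function name `copy_recent_images`, the extension list and the `dry_run` default are my own assumptions, not anything from the original command.

```python
import shutil
from datetime import datetime
from pathlib import Path

# Assumed extension list; add or remove formats to suit your own files.
IMAGE_EXTS = {".jpg", ".jpeg", ".png", ".gif", ".bmp", ".tiff",
              ".svg", ".webp", ".avif", ".jxl"}


def copy_recent_images(src, dst, cutoff, dry_run=True):
    """Copy image files under src modified after cutoff into dst.

    Modification time is used as a stand-in for creation time.
    The directory layout below src is recreated below dst.
    Returns the relative paths that were (or, in a dry run, would be) copied.
    """
    src, dst = Path(src), Path(dst)
    cutoff_ts = cutoff.timestamp()
    copied = []
    for path in sorted(src.rglob("*")):
        # Skip directories and anything that is not a known image type.
        if not path.is_file() or path.suffix.lower() not in IMAGE_EXTS:
            continue
        # Skip files last modified at or before the cutoff.
        if path.stat().st_mtime <= cutoff_ts:
            continue
        rel = path.relative_to(src)
        if not dry_run:
            target = dst / rel
            target.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(path, target)  # copy2 also preserves timestamps
        copied.append(rel)
    return copied
```

Calling `copy_recent_images("/home/user/Documents", "/home/user/foo", datetime(2024, 1, 1))` only lists what would be copied; pass `dry_run=False` once the list looks right.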

Note that sometimes AI models check whether code works by executing it. For example, Gemini can write a Python function and execute it to report the results.

Nice

I hear this over and over but none of what you say actually matters.

It’s not luck if it gives accurate and detailed answers for almost every question that actually compiles and works.

I think the difference in opinion comes down to what you use it for. In some areas I imagine it will just hallucinate. But in others, such as coding, it’s often almost 100% correct and a magic tool for learning and saving soooo much time.

I was wondering how long it would take the gatekeepers to show up. The command works, and is perfectly fine. If I had any uncommon formats, I would tell gpt to include them.

I’m quite sure it won’t be long until some bad practice spreads like this. Giving clueless “Linux pros” top advice on how to enable a back door.

LLMs can be poisoned and as datasets increase and complexity grows it will be harder to contain.

Cgpt works great for some stuff, but all you know is that someone somewhere wrote something similar. They are no better than Google in predicting what is good material and what’s wrong, and training is statistics.

In order to poison an LLM, you’d need access to the training process, which is locked down by openai. Just posting false info on the net isn’t enough. GPT doesn’t simply repeat what’s already been written.

More than that though, you can find plenty of wrong and bad advice posted confidently by legions of Linux gatekeepers on any forum.

Anyone who has ever spent any time on stack overflow will tell you why they’d rather talk to an LLM instead of posting there.

chatgpt only generates text. that’s how it was supposed to work. it doesn’t care if the text it’s generating is true, or if it even makes any sense. so sometimes it will generate untrue statements (with the same confidence as the ‘linux gatekeepers’ you mentioned, except with no comments to correct the response), no matter how well you train it. and if there’s enough wrong information in the dataset, it will start repeating it in the responses, because again, its only real purpose is to pick out the next word in a string based on the training data it got. sometimes it gets things right, sometimes it doesn’t, we can’t just blindly trust it. pointing that out is not gatekeeping.

Here’s an example that confirms that “Chatgpt does not know truth. It does not know if the info it provides is true.” or, more like, “It will spit out an answer that matches your inquiry and sounds correct even if it’s totally made up.”

https://chat.openai.com/share/206fd8e9-600c-43f8-95be-cb2888ccd259

Summary:

User

in `podman stats` you see BLOCK IO as a summary of hard drive activity. how to reset the

ChatGPT

To reset the block I/O statistics displayed by podman stats, you can use the podman stats --reset command.

User

Error: unknown flag: --reset

ChatGPT

Apologies for the confusion. It seems I provided incorrect information. The podman stats command does not have a built-in option to reset the statistics.

So once again, don’t be afraid to use it, but do your own research, especially if following an LLM could result in something breaking, both in tech and in life.

You left out the part where it then gave you the correct answer.

I didn’t leave it out; I needed to provide that “part” to it to get the correct answer.

Because, as is mentioned over and over again in this whole thread, chatgpt doesn’t know the correct answer. It’s a mathematical model of “what looks ok” and “what should be the next word”. It looks ok to put a --reset parameter there to reset it, but because chatgpt can’t actually check the documentation of podman stats to see if the param exists, it just generates it based on “commonly known text patterns”, and those patterns are written in a way that suggests it is the truth.

So once again - do your own research if following the results could break something, both in tech and especially in life. And that is true for both chatgpt and random pages on the internet.

In this case I did follow chatgpt’s answer exactly, without doing any fact checking: I asked chatgpt, copied the command and pasted it into the terminal, because I knew that if it didn’t work the worst that could happen was that it would fail and do nothing. But it’s bad for new people who won’t know what the result could be if it’s wrong!

@z00s Don’t get me wrong. I’m not telling you not to use it, on the contrary.

You should use any tool that helps you do your job/task. But you should try to understand how to use those tools wisely.

Telling someone never to use ChatGPT is like telling someone never to use an excavator. That is wrong: you should use an excavator, but you should know what an excavator is and what harm it could do, for example accidentally destroying a building or even hurting someone (or yourself), if not used wisely.

Here are some hallucination examples: https://github.com/giuven95/chatgpt-failures

Gatekeeping ain’t bad. It keeps the trash out.

Then why are you here?

Cute

You get what you give

don’t run any commands that you don’t understand. Ask it to break down any commands it tells you to run if you don’t understand them.

You need to pay extra attention to this, as ML models will spit out commands and parameters that don’t exist if there weren’t enough examples in the training dataset for that action. Especially with explanations, as it could just spit out a totally wrong but “good sounding” explanation for a parameter etc., and it won’t always use the magic keywords like “typically” that indicate it doesn’t have confidence because the answer is “based on other similar commands/knowledge”.

In your example it spit out:

-m: Prune empty directory chains from the file-list.

--prune-empty-dirs: Exclude empty directories that result from the inclusion/exclusion pattern.

which is actually exactly the same parameter with 2 different explanations. You can confirm this with

man rsync

--prune-empty-dirs, -m prune empty directory chains from file-list

So the more of an edge case you have, the bigger the chance it will spit out bad results, but those new models are shockingly good, especially for very common use cases.
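The same kind of check can be roughly automated before pasting a suggested command into a terminal. This is only a sketch: `long_flags` and `unknown_flags` are names I made up, the regex only looks at `--long` options, and it assumes the tool lists its options in its `--help` output. A flag that passes this check can still be misused, so it is no replacement for reading the man page.

```python
import re
import shutil
import subprocess

# Matches long options such as --prune-empty-dirs (but not short ones like -m).
LONG_FLAG = re.compile(r"(?<![\w-])--[a-z][a-z0-9-]*")


def long_flags(command_line):
    """Return the long options used in a command line, in order, without duplicates."""
    seen = []
    for flag in LONG_FLAG.findall(command_line):
        if flag not in seen:
            seen.append(flag)
    return seen


def unknown_flags(command_line, help_text):
    """Return long options from command_line that never appear in help_text."""
    documented = set(LONG_FLAG.findall(help_text))
    return [flag for flag in long_flags(command_line) if flag not in documented]


if __name__ == "__main__":
    # Hypothetical command to check; replace it with whatever the LLM suggested.
    suggested = "rsync -avm --include='*/' --exclude='*' --prune-empty-dirs --min-age=0 SRC DST"
    if shutil.which("rsync"):
        result = subprocess.run(["rsync", "--help"], capture_output=True, text=True)
        # Some tools print help to stderr, so look at both streams.
        print(unknown_flags(suggested, result.stdout + result.stderr))
```

Anything it prints is a flag worth looking up by hand before running the command.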

Absolutely. And I would also add that the more critical the use case, the more work you should do to double check things. Don’t rely on gpt alone if you’re doing critical backups, for example. But if you just want a python program that sorts MP3s, then go ahead and give it a whirl.

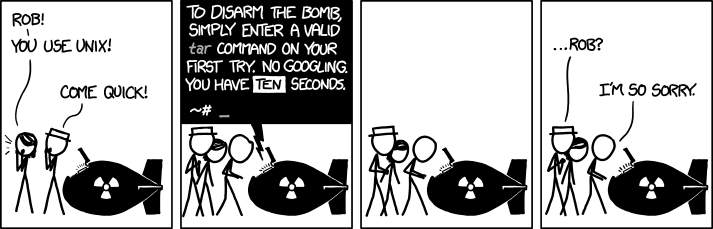

Interesting post, but made me also think of this one : https://xkcd.com/1168/

What I’ve always wondered about that one is: why bother forbidding Google but not ‘man tar’? 🤨

😀 After seeing the comic for the first time I thought that the “UNIX” ™ person simply could have gone for

tar --help

or

tar --version

as a valid command to show off their “UNIX” skills and save all.

Also surely a lot of people would know tar -Create Ze Vucking File and/or tar -Xtract Ze Vucking File

I interpret “use a valid tar command on your first try” as not allowing to run other commands before the tar command.

Surely the bomb isn’t the only computer in the immediate area.

It says you only have ten seconds. I doubt you could log onto another (Unix) computer in that time, open the terminal, read the man page and then run over and enter a valid command…

I’m not opposed at all to using LLMs for such purposes, however, please consider a solution that aligns with the values of GNU/Linux and the Free Software Movement. If you have sufficient RAM and a somewhat modern CPU, you can do inference on your very own machine, locally, with no connection to any external servers. And at very respectable speed.

Serious question: Can running locally be as good as ChatGPT-4?

It’s worth doing anyway to get a sense of how computationally intensive it is. Then consider how many people ask for the daily fart joke and you get a sense of the environmental impact.

in terms of the quality of writing you can get models from 20GB at a similar level to GPT-4 (good for creative writing but much worse if knowledge of something is required)

the model I use (~20GB) would know what rclone is but would most likely not know how to use it

EDIT: now that I think about it, it was based off of some benchmark. personally I wouldn’t say it performs at GPT-4 but maybe GPT-3.5

Which model is that? I tried several ones that were complete trash, then Mixtral appeared and started giving answers that are very basic but mostly factually correct. But none of these are even close to ChatGPT, which I can rely on for writing scripts.

Don’t get me wrong, I’d rather not give them my data and money if there was an alternative. But for tech stuff we’re not there yet.

yeah, my bad. edited the comment with more accurate info

and this does apply to creative writing, not knowledgeable stuff like coding

I am actually curious about that, would I need a high powered GPU? I’m running a refurbished Dell Optiplex with a very basic video card that I added

User: “ChatGPT, write me a script to clean up my hard disk on Linux”

ChatGPT: sudo rm -rf / 😁

Squeaky clean 😅

I’m all for it as long as you keep using your brain. Coworker of mine set something up on AWS that wasn’t working. Going through it I found the error. He said he tried it using chatgpt. He knows how to do it himself, he knows the actual mistake was a mistake, but he trusted Amazon Q when it said the mistake was correct. Even when double checking.

Trust, but verify.

I found it to be a helpful tool in your toolkit. Just like being able to write effective search queries is. Copying scripts off the internet and running them blindly is a bad idea. The same thing holds up for LLMs.

It may seem like it knows what it’s talking about, but it can often talk out of its arse too…

I’ve personally had good results with 3.5 on the free tier. Unless you’re really looking for the latest data

For myself, I’m fine with using ChatGPT and other LLMs (I’ve been experimenting with trying to run them locally, so that I can gain some insight on them a bit better) to “fill in the gaps”, or as a sort of interactable Wikipedia - but I try to avoid asking LLMs something that I have zero knowledge of, because it then makes it a bit more difficult to verify the results it produces.

I love ChatGPT. It’s an invaluable tool. It has helped me solve my problems by pointing me in the right direction significantly faster than any search engine.

Is this an ad?

You could also use free LLMs, check out FMHY.

Removed by mod

Wtf dude did you read the post?

Rude!

Must be fun reading all that source code in your bloated distro.

I’m guessing for you it’s number 3.

The high rate of gatekeeping in the Linux community can be attributed to several factors:

- Expertise and Complexity: Linux, with its technical complexity and steep learning curve, can foster environments where expertise is highly valued. This can lead to some individuals using their knowledge as a form of power, controlling access to information and resources.

- Cultural Heritage: The origins of Linux in a niche, technically proficient community have perpetuated a culture that prizes deep knowledge and expertise, sometimes at the expense of inclusivity.

- Identity and Status: For some, their identity and status within the community are closely tied to their perceived expertise and control over certain aspects of the technology, which can lead to gatekeeping behaviors to protect their standing.

- Fear of Dilution: There’s often a concern that broadening the community might dilute its values or lower its standards, prompting some members to gatekeep to maintain a perceived purity or quality.

Efforts to address these issues often involve community-led initiatives to foster a more welcoming and inclusive environment, alongside mentorship programs to help newcomers navigate the community more effectively.

I apologise for the language but I am fed up with this shit.

I don’t think promoting OpenAI/Microsoft services is very compatible with the open source/free software ethos.

OpenAI pretend to be a non-profit but are controlled by billionaires and Microsoft. I am not going to take up a subscription and have my personal data mined by a company so I can have the arch wiki and man pages developed with millions of hours of volunteer labour served back to me.

I used to attend Linux/free software conferences decades ago and there would always be that one person who thought Facebook or Google were brilliant and that adding everyone’s lives and personal data to gmail or facebook was totally fine because the APIs were cool and big companies are totally ethical, “Don’t be Evil” etc. I thought they were foolish then and I think time has shown they are even more foolish now.

Every news site and forum I go to, even very non-technical ones, has people appear out of nowhere exclaiming with enthusiasm how OpenAI/copilot solved all their problems in great detail. Whether they are genuine or are just on the hype train created by bots and paid influencers, I am at my breaking point with this shit. It is worse than the crypto bros with their NFT monkeys and get rich schemes. It has nothing directly to do with Linux or open source software. I escaped reddit to avoid all the influencers and people peddling shit but it is here as well and people can’t see it for what it is.

Buddy can’t think for themselves and have to generate a response via AI. Pathetic.

You’d be a number 4

You are a number 2, buddy. I’m not referring to your list.

This isn’t even a Linux thing. You AI bros just fucking suck in general.

Lol you certainly earned what they said with this brilliant fucking reply.


It’s a pretty helpful tool, but I still prefer running an LLM locally, even though it’ll take a while to answer, then

You can use Copilot or Mistral Chat for pretty much the same. Copilot offers GPT-4 (or 4.5) for free, Mistral Chat is using their own models which sometimes produce better results.

To be honest Microsoft restrictions made copilot extremely ineffective. I asked it to help me disable ssl verification in one of the java’s http clients for testing purposes during development. It said it’s something I never should do and will not give me an answer. ChatGPT restrictions are way more rational than that. Microsoft gutted the tool a lot.

If you haven’t already tried it I would also highly recommend phind.com for troubleshooting or coding questions.

Also for a nice quick access to gpt from your terminal grab “tgpt” and you can ask questions directly from your terminal.

Thanks for the tip!

I’ve found it’s best for things you kinda already know the answer to or at least know what it should look like, it fills in the blanks

Also, for gpt 4 you can get it without the subscription if you do it through the API and use something like gpt-cli (you’re still paying for it but unless you’re talking to it hours on end it’ll end up cheaper that way)

Someone excitedly demonstrated to me how easy it is to code with copilot. They generated a bunch of code easily. And then proceeded to debug subtle bugs for longer than it would have taken to write it yourself in the first place.

And in the end they were still left with badly structured and hard-to-maintain code.

LLMs will do exactly what Stackoverflow has done, but more efficiently: allow proliferation of bad/outdated solutions to problems, and application of those with no real understanding.

More garbage code and more work for the few people who continue to actually read manuals and understand what they are doing.

Perhaps, but I’m not really suggesting its use for professional programming in this post.

What it is good for is helping with simple stuff like terminal commands, learning python etc. Stuff that has a low risk profile that you’re not relying on for anything too important.