The literal judgement is in on using AI to write speeches

I think one of the big problems is that we, as humans, are very easily fooled by something that can look or sound “alive”. ChatGPT gets a lot of hype, but it’s primarily coming from a form of textual pareidolia.

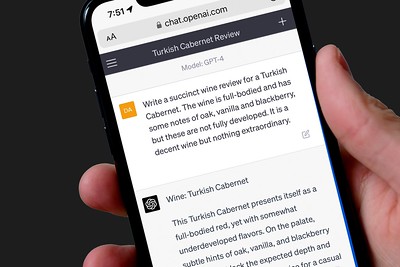

It’s hard to convince people that ChatGPT has absolutely no idea what it’s saying. It’s putting words together in a human-enough way that we assume it has to be thinking and it has to know things, but it can’t do either. It’s not even intended to try to do either. That’s not what it’s for. It takes the rules of speech and a massive amount of data on which word is most likely to follow which other word, and runs with it. It’s a super-advanced version of a cell phone keyboard’s automatic word suggestions. Even just asking it to correct the punctuation on a complex sentence is too much to ask (in my experiment on this matter, it gave me incorrect answers 4 times, until I explicitly told it how it was supposed to treat coordinating conjunctions).
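To make the keyboard-suggestion comparison concrete, here is a minimal sketch of "which word is most likely to follow which other word". It is a toy bigram counter, not how ChatGPT actually works: real language models use neural networks over much longer contexts. The corpus and the function names (`build_followers`, `suggest_next`, `continue_text`) are made up for illustration.

```python
from collections import Counter, defaultdict

def build_followers(text):
    """For each word, count which words come right after it."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers

def suggest_next(followers, word, k=3):
    """Return up to k of the most frequent followers of `word`."""
    counts = followers.get(word.lower(), Counter())
    return [w for w, _ in counts.most_common(k)]

def continue_text(followers, start, length=4):
    """Keep appending the single most likely next word."""
    words = [start.lower()]
    for _ in range(length):
        options = suggest_next(followers, words[-1], k=1)
        if not options:
            break  # this word was never followed by anything in the corpus
        words.append(options[0])
    return " ".join(words)

# Hypothetical toy corpus, standing in for "a massive amount of data".
corpus = "the cat sat on the mat the cat ate the fish the dog sat on the rug"
followers = build_followers(corpus)

print(suggest_next(followers, "the", k=1))
print(continue_text(followers, "dog"))
```

Nothing in there knows what a cat or a dog is. It only knows what tended to come next in the text it was given, which is the point being made above.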

And for most uses, that’s good enough. Tell it to include a few extra rules, depending on what you’re trying to create, and watch it spin out a yarn. But I’ve had several conversations with ChatGPT, and I’ve found it incredibly easy to “break”, in the sense of making it produce things that sound somewhat less human and significantly less rational. What concerns me about ChatGPT isn’t necessarily that it’s going to take my job, but that people believe it’s a rational, thinking, calculating thing. It may be that some part of us is biologically hard-wired to; it’s probably that same part that keeps seeing Jesus on burnt toast.

It is so hard to convince people of this. However much I explain that ChatGPT is programmed to generate text that looks like it was written by a human, people want to believe it can do math and reason things out.

I usually point them to this hilarious video: https://www.youtube.com/watch?v=nUqPOsgu0uo

Yeah, the illusion is quickly dispelled once you spend any time with it. I was trying it out when I was doing worldbuilding for a story I was writing. If you ask it to name 20 towns and describe them it spits out a numbered list. Same with characters. But then I asked it to make the names quirkier and it just used the same names again and described all the towns as quirky. I also asked it to make characters with names that are also adjectives and then describe them. Names like Able, Dusty, Sunny, Major.

The first iteration had a list of names and a description, but the description always related to the adjective. Sunny had a sunny disposition and a bright smile. I told it the description should be unrelated to the name and it did the same thing again. I told it to change the name but not the description, and it still rewrote the descriptions to match the name but didn’t change the structure.

Nothing I told it could make it move off the idea that a man called Sunny must be sunny. It basically can’t be creative or even random when completing tasks.

This is fine when writing dry material that nobody will read, but if you want someone to enjoy reading or listening to what is written, then the human spark is required.

I just tested the character name thing and it got it on the first try. Maybe GPT-4 just handles it better?

It was GPT-4 I was using. It could be that you wrote it as one instruction and your intentions were very clear from the beginning, while I explained it across multiple changes and clarifications when I noticed it wasn’t giving me quite what I wanted.

Part of it is that I was intentionally being very human in my instructions, leaving it open to interpretation and then clarifying or adding things as I brainstormed. It’s a messy way of doing it, but AI needs to be able to handle messy instructions in order to be considered on par with people.

Edit: turns out it wasn’t GPT-4 I was using; I was using the free chat on OpenAI’s website. I was not aware that they were different.

I’m with Mike Langberg on this:

Intelligent agents are the technology of the future, and always will be.

“Jesus on burnt toast” is probably the most apt comparison I’ve heard on the matter LOL. I like your take. ChatGPT (and AI language models in general) are an excellent tool, but at the end of the day, they’re just that - a tool. It’s a tool I’ve already begun using in my academics, while keeping its weaknesses in mind. It is QUITE flawed, after all. I feel that, like calculators, it’ll fall into a good role alleviating tedium from certain tasks, but the shock it’s caused in society hasn’t worn off yet. Now, modern-day calculators did actually replace an antiquated job, from which the name ‘calculator’ originated, but I don’t feel ChatGPT is good enough at anything to actually replace any specific profession, especially anything that requires more than a basic comprehension of a subject. It’s ultimately still just word vomit, and needs close supervision. After all, all I see in the news is how people have used ChatGPT and gotten into serious trouble over faulty generations (such as ChatGPT making up fake court cases for lawyers). I’ve not seen “ChatGPT found the cure to cancer!” Although I remember seeing one incident where it did actually progress a field somewhat by giving professionals a different way of thinking about how to solve a problem. The flood of extremely sub-par AI-written books on the market reassures me that writers aren’t going to get replaced anytime soon, even though I feel that profession is most at risk, if any are.

I think we need to focus on the format it is best at: if you put a block of text in a hypothetical text webpage, it will generate the rest of that webpage. The generation will make logical sense within context, but must be assisted by step-by-step prompting, “memory”, or other methods. Accurate output will require more and more overhead tokens, and managing it will eventually become an actual job. Until then, it’s essentially a pseudo-internet webpage without Google. You need to treat the output with the same wariness as any random website on the internet.

This is silly.

The article is an anecdote about one incompetent user using a new tool: ChatGPT.

He uses the wrong tool for what he’s trying to accomplish: finding sources. The free version of ChatGPT cannot search the internet and has no internal fact memory, as he seems to wrongly assume.

So he, like many others, runs into hallucinations.

Then he jumps to conclusions:

- Our jobs are safe

- Chat GPT doesn’t make mistakes or tell falsehoods – it just gets confused

- He was going to have to produce the substance of his keynote address the old-fashioned way

How much weight does this assessment or article have?

People who better understand what they can expect from an LLM, and who are willing to invest a tad more time into learning how to use a new tool well, will of course produce better results.

If you want an LLM which can find sources, use an LLM which can find sources. Use the paid ChatGPT 4.0, Bing AI or perplexity.ai.

Like all tools which are used well, they become a productivity multiplier, which naturally means a smaller workforce is required to do the same work. If your job involves text, and you refuse to learn how to use state-of-the-art tools, your job is probably not that safe. Yes, maybe “for the next week or so”, but AI development has not stopped, so how does that help? You’re not going to be replaced by AI, but by people who learned how to work with AI.

Here’s a paper on the topic, which comes to vastly different conclusions than this anecdotal opinion piece: GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models

You can upload it to https://www.chatpdf.com/ to get summaries or ask questions.

Calm down, mate, it was a humorous exercise.